AMD Radeon RX 9070 XT: Two-minute review

AMD had one job to do with the launch of its RDNA 4 graphics cards, spearheaded by the AMD Radeon RX 9070 XT, and that was to not get run over by Blackwell too badly this generation.

With the RX 9070 XT, AMD not only managed to hold its own against the GeForce RTX monolith, it also positioned Team Red perfectly to take advantage of the growing discontent among gamers upset over Nvidia's latest GPUs, and it did so with one of the best graphics cards I've ever tested.

The RX 9070 XT is without question the most powerful consumer graphics card AMD's put out, beating the AMD Radeon RX 7900 XTX overall and coming within inches of the Nvidia GeForce RTX 4080 in 4K and 1440p gaming performance.

It does so with an MSRP of just $599 (about £510 / AU$870), which is substantially lower than those two cards' MSRPs, much less their asking prices online right now. This matters because AMD traditionally hasn't faced the kind of scalping and price inflation that Nvidia's GPUs experience (it does happen, obviously, but not nearly to the same extent as with Nvidia's RTX cards).

That means, ultimately, that gamers who look at the GPU market and find empty shelves, extremely distorted prices, and uninspiring performance for the price they're being asked to pay have an alternative that will likely stay within reach, even if price inflation keeps it above AMD's MSRP.

The RX 9070 XT's performance comes at a bit of a cost though, such as the 309W maximum power draw I saw during my testing, but at this tier of performance, this actually isn't that bad.

This card also isn't too great when it comes to non-raster creative performance and AI compute, but no one is looking to buy this card for its creative or AI performance, as Nvidia already has those categories on lock. No, this is a card for gamers, and for that, you just won't find a better one at this price. Even if the price does get hit with inflation, it'll still likely be way lower than what you'd have to pay for an RX 7900 XTX or RTX 4080 (assuming you can find them at this point), making the AMD Radeon RX 9070 XT a gaming GPU that everyone can appreciate and maybe even buy.

AMD Radeon RX 9070 XT: Price & availability

- How much is it? MSRP is $599 (about £510 / AU$870)

- When can you get it? The RX 9070 XT goes on sale March 6, 2025

- Where is it available? The RX 9070 XT will be available in the US, UK, and Australia at launch

The AMD Radeon RX 9070 XT is available as of March 6, 2025, starting at $599 (about £510 / AU$870) for reference-spec third-party cards from manufacturers like Asus, Sapphire, Gigabyte, and others, with OC versions and those with added accoutrements like fancy cooling and RGB lighting likely selling for higher than MSRP.

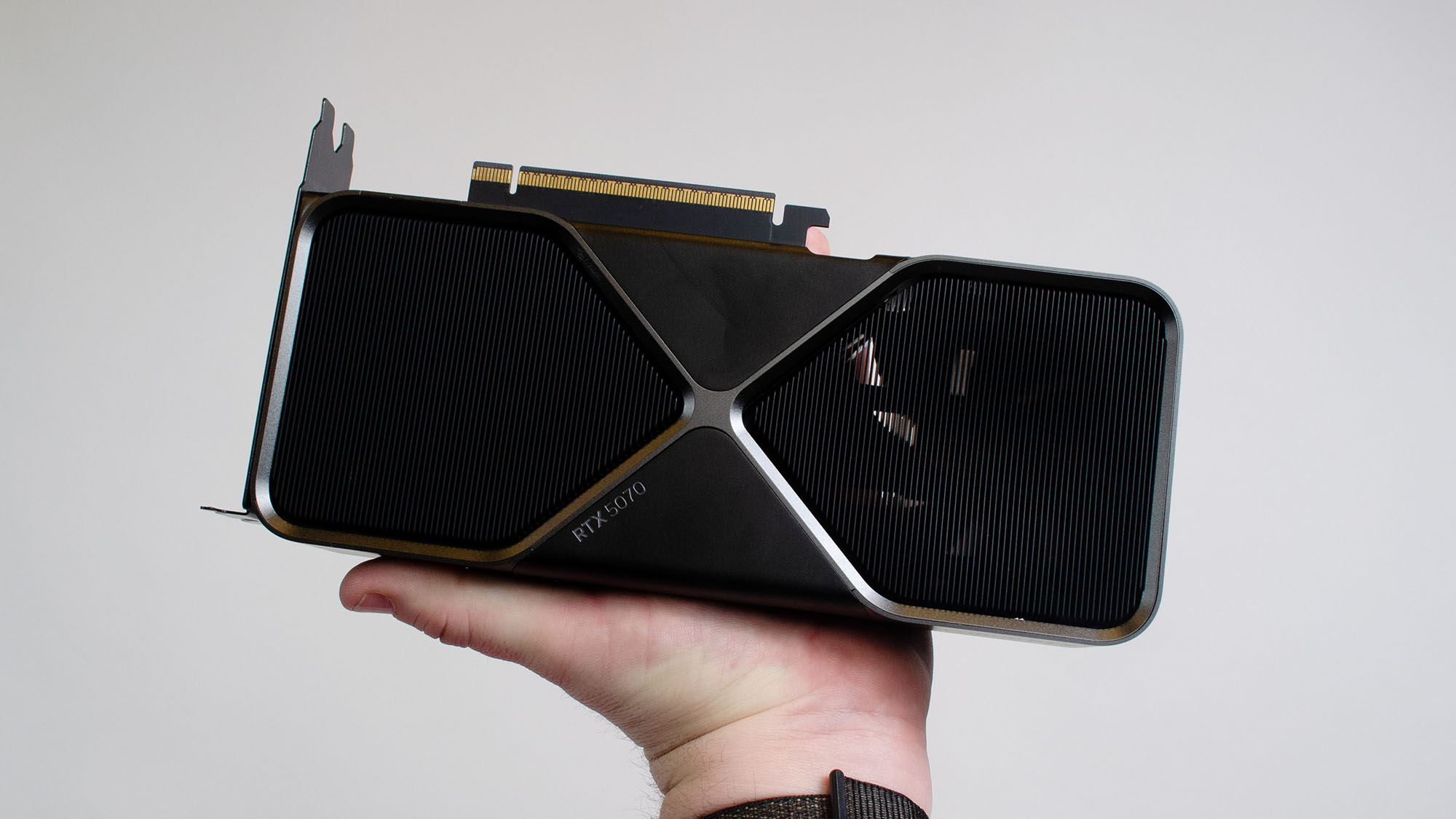

At this price, the RX 9070 XT comes in about $150 cheaper than the RTX 5070 Ti, and about $50 more expensive than the RTX 5070 and the AMD Radeon RX 9070, which also launches alongside the RX 9070 XT. This price also puts the RX 9070 XT on par with the MSRP of the RTX 4070 Super, though this card is getting harder to find nowadays.

While I'll dig into performance in a bit, given the MSRP (and the reasonable hope that this card will be findable at MSRP in some capacity), the RX 9070 XT's value proposition is second only to the RTX 5070 Ti's, if you're going by MSRP. Since price inflation on the RTX 5070 Ti will persist for some time at least, in many cases you'll likely find the RX 9070 XT offers the best performance per dollar of any enthusiast card on the market right now.

- Value: 5 / 5

AMD Radeon RX 9070 XT: Specs

- PCIe 5.0, but still just GDDR6

- Hefty power draw

The AMD Radeon RX 9070 XT is the first RDNA 4 card to hit the market, and so it's worth digging into its architecture for a bit.

The new architecture is built on TSMC's N4P node, the same as Nvidia Blackwell, and in a move away from AMD's MCM push with the last generation, the RDNA 4 GPU is a monolithic die.

As there's no direct predecessor for this card (or for the RX 9070, for that matter), there's not much that we can compare the RX 9070 XT against apples-to-apples, but I'm going to try: if it had a last-gen equivalent, the RX 9070 XT would sit roughly between the RX 7800 XT and the RX 7900 GRE.

The Navi 48 GPU in the RX 9070 XT sports 64 compute units, breaking down into 64 ray accelerators, 128 AI accelerators, and 64MB of L3 cache. Its cores are clocked at 1,600MHz to start, but can run as fast as 2,970MHz, just shy of the 3GHz mark.

It uses the same GDDR6 memory as the last-gen AMD cards, with a 256-bit bus and a 644.6GB/s memory bandwidth, which is definitely helpful in pushing out 4K frames quickly.
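As a rough sanity check on those memory figures, the quoted bandwidth and bus width together imply an effective per-pin data rate. The snippet below is a back-of-the-envelope sketch, not an AMD specification: the function name is made up for illustration, and it assumes the 644.6GB/s figure is peak theoretical bandwidth.

```python
def per_pin_data_rate_gbps(bandwidth_gb_s: float, bus_width_bits: int) -> float:
    """Effective data rate per memory pin, in Gbit/s.

    Peak bandwidth (GB/s) = bus width (bits) * per-pin rate (Gbit/s) / 8,
    so the per-pin rate is bandwidth * 8 / bus width.
    """
    if bus_width_bits <= 0:
        raise ValueError("bus width must be positive")
    return bandwidth_gb_s * 8 / bus_width_bits


# Figures quoted in this review for the RX 9070 XT.
rate = per_pin_data_rate_gbps(644.6, 256)
print(f"{rate:.2f} Gbit/s per pin")
```

That works out to a little over 20Gbit/s per pin, which is in line with what you'd expect from fast GDDR6.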

The TGP of the RX 9070 XT is 304W, which is a good bit higher than the RX 7900 GRE, though for that extra power, you do get a commensurate bump up in performance.

- Specs: 4 / 5

AMD Radeon RX 9070 XT: Design

- No AMD reference card

- High TGP means bigger coolers and more cables

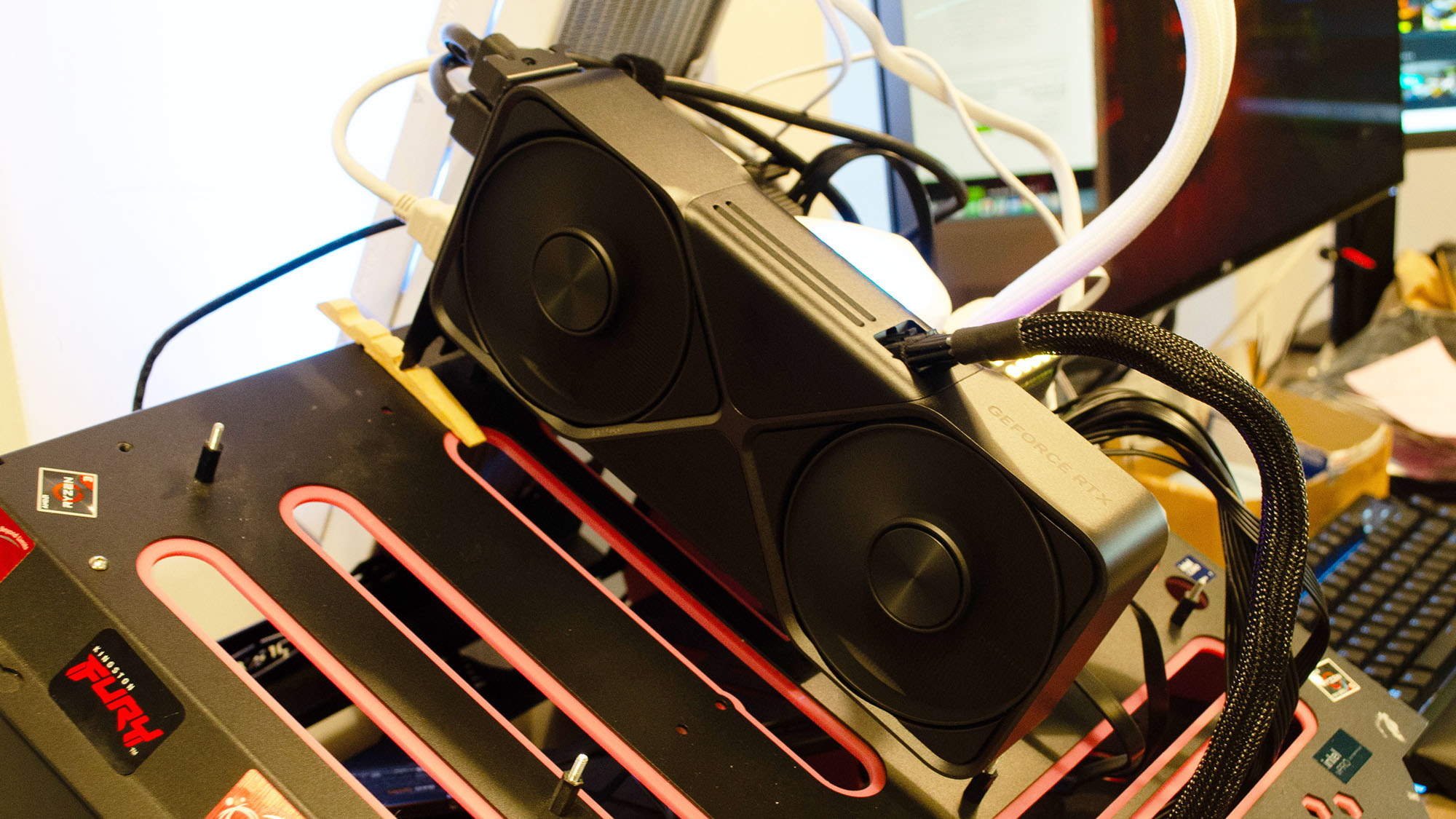

There's no AMD reference card for the Radeon RX 9070 XT, but the unit I got to test was the Sapphire Pulse Radeon RX 9070 XT, which I imagine is pretty indicative of what we can expect from the designs of the various third-party cards.

The 304W TGP all but ensures that any version of this card you find will be a triple-fan cooler over a pretty hefty heatsink, so it's not going to be a great option for small form factor cases.

Likewise, that TGP just puts it over the line where it needs a third 8-pin PCIe power connector, something that you may or may not have available in your rig, so keep that in mind. If you do have three spare power connectors, cable management will almost certainly be a hassle as well.

After that, it's really just about aesthetics, as the RX 9070 XT (so far) doesn't have anything like the dual pass-through cooling solution of the RTX 5090 and RTX 5080, so it's really up to personal taste.

As for the card I reviewed, the Sapphire Pulse shroud and cooling setup on the RX 9070 XT was pretty plain, as far as desktop GPUs go, but if you're looking for a non-flashy look for your PC, it's a great-looking card.

- Design: 4 / 5

AMD Radeon RX 9070 XT: Performance

- Near-RTX 4080 levels of gaming performance, even with ray tracing

- Non-raster creative and AI performance lags behind Nvidia, as expected

- Likely the best value you're going to find anywhere near this price point

The charts shown below offer the most recent data I have for the cards tested for this review. They may change over time as more card results are added and cards are retested. The 'average of all cards tested' includes cards not shown in these charts for readability purposes.

Simply put, the AMD Radeon RX 9070 XT is the gaming graphics card that we've been clamoring for this entire generation. While it shows some strong performance in synthetics and raster-heavy creative tasks, gaming is where this card really shines, coming within 7% of the RTX 4080 overall and within 4% of the RTX 4080's overall gaming performance. For a card launching at half the RTX 4080's launch price, this is a fantastic showing.
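To put that value claim in rough numbers, here is a minimal sketch of a performance-per-dollar comparison against the RTX 4080. Treat the inputs as assumptions: the RTX 4080's $1,199 launch MSRP isn't stated in this review, "within 4%" is read as 96% of the RTX 4080's gaming performance, and the function name is purely illustrative.

```python
def perf_per_dollar_ratio(rel_perf: float, price: float, ref_price: float) -> float:
    """How many times more performance per dollar a card offers than a reference card.

    rel_perf is the card's performance as a fraction of the reference
    card's performance (1.0 means equal performance).
    """
    if price <= 0 or ref_price <= 0:
        raise ValueError("prices must be positive")
    return rel_perf * ref_price / price


RX_9070_XT_MSRP = 599.0        # MSRP quoted in this review
RTX_4080_LAUNCH_MSRP = 1199.0  # assumption: launch MSRP, not stated in this review
REL_GAMING_PERF = 0.96         # assumption: "within 4%" read as 96% of the RTX 4080

ratio = perf_per_dollar_ratio(REL_GAMING_PERF, RX_9070_XT_MSRP, RTX_4080_LAUNCH_MSRP)
print(f"{ratio:.2f}x the gaming performance per dollar")
```

Street prices will move both sides of that ratio, so it's only a snapshot at MSRP.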

The RX 9070 XT is squaring up against the RTX 5070 Ti, however, and here the RTX 5070 Ti does manage to pull well ahead of the RX 9070 XT, but it's much closer than I thought it would be starting out.

On the synthetics side, the RX 9070 XT excels at rasterization workloads like 3DMark Steel Nomad, while the RTX 5070 Ti wins out in ray-traced workloads like 3DMark Speed Way, as expected, but AMD's third-generation ray accelerators have definitely come a long way in catching up with Nvidia's more sophisticated hardware.

Also, as expected, when it comes to creative workloads, the RX 9070 XT performs very well in raster-based tasks like photo editing, and worse at 3D modeling in Blender, which is heavily reliant on Nvidia's CUDA platform, giving Nvidia an all but permanent advantage there.

In video editing, the RX 9070 XT likewise lags behind, though it's still close enough to Nvidia's RTX 5070 Ti that video editors won't notice much difference, even if the difference is there on paper.

Gaming performance is what we're on about though, and here the sub-$600 GPU holds its own against heavy hitters like the RTX 4080, RTX 5070 Ti, and Radeon RX 7900 XTX.

In 1440p gaming, the RX 9070 XT is about 8.4% faster than the RTX 4070 Ti and RX 7900 XTX, just under 4% slower than the RTX 4080, and about 7% slower than the RTX 5070 Ti.

This strong performance carries over into 4K gaming as well, thanks to the RX 9070 XT's 16GB VRAM. Here, it's about 15.5% faster than the RTX 4070 Ti and about 2.5% faster than the RX 7900 XTX. Against the RTX 4080, the RX 9070 XT is just 3.5% slower, while it comes within 8% of the RTX 5070 Ti's 4K gaming performance.
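If you'd rather see those percentages on a single scale, the sketch below converts them into a relative index with the RX 9070 XT set to 100. It uses only the rounded figures quoted above (so "just under 4%" becomes 4.0 and "within 8%" becomes 8.0), and it assumes "X% slower than card B" means the RX 9070 XT delivers (100 - X)% of card B's frame rate; the function and variable names are illustrative.

```python
def relative_index(pct_vs_other: float) -> float:
    """Index of the *other* card when the RX 9070 XT is set to 100.

    pct_vs_other > 0: the RX 9070 XT is that many percent faster than the other card.
    pct_vs_other < 0: the RX 9070 XT is that many percent slower than the other card.
    """
    if pct_vs_other <= -100:
        raise ValueError("a card cannot be 100% or more slower")
    return 100 / (1 + pct_vs_other / 100)


# Rounded percentages quoted in this review (RX 9070 XT relative to each card).
quoted = {
    "1440p": {"RTX 4070 Ti": 8.4, "RX 7900 XTX": 8.4, "RTX 4080": -4.0, "RTX 5070 Ti": -7.0},
    "4K": {"RTX 4070 Ti": 15.5, "RX 7900 XTX": 2.5, "RTX 4080": -3.5, "RTX 5070 Ti": -8.0},
}

index = {
    resolution: {card: round(relative_index(pct), 1) for card, pct in cards.items()}
    for resolution, cards in quoted.items()
}

for resolution, cards in index.items():
    print(resolution, "(RX 9070 XT = 100):", cards)
```

Because the inputs are rounded, the resulting index values are approximate and shouldn't be read as measured results.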

When all is said and done, the RX 9070 XT doesn't quite overpower one of the best Nvidia graphics cards of the last generation (and definitely doesn't topple the RTX 5070 Ti), but given its performance class, its power draw, its heat output (which wasn't nearly as bad as the power draw might indicate), and most of all, its price, the RX 9070 XT is easily the best value of any graphics card playing at 4K.

And given Nvidia's position with gamers right now, AMD has a real chance to win over some converts with this graphics card, and anyone looking for an outstanding 4K GPU absolutely needs to consider it before making their next upgrade.

- Performance: 5 / 5

Should you buy the AMD Radeon RX 9070 XT?

Buy the AMD Radeon RX 9070 XT if...

You want the best value proposition for a high-end graphics card

The performance of the RX 9070 XT punches way above its price point.

You don't want to pay inflated prices for an Nvidia GPU

Price inflation is wreaking havoc on the GPU market right now, but this card might fare better than Nvidia's RTX offerings.

Don't buy it if...

You're on a tight budget

If you don't have a lot of money to spend, this card is likely more than you need.

You need strong creative or AI performance

While AMD is getting better at creative and AI workloads, it still lags far behind Nvidia's competing offerings.

How I tested the AMD Radeon RX 9070 XT

- I spent about a week with the AMD Radeon RX 9070 XT

- I used my complete GPU testing suite to analyze the card's performance

- I tested the card in everyday, gaming, creative, and AI workload usage

Here are the specs on the system I used for testing:

Motherboard: ASRock Z790i Lightning WiFi

CPU: Intel Core i9-14900K

CPU Cooler: Gigabyte Aorus Waterforce II 360 ICE

RAM: Corsair Dominator DDR5-6600 (2 x 16GB)

SSD: Crucial T705

PSU: Thermaltake Toughpower PF3 1050W Platinum

Case: Praxis Wetbench

I spent about a week with the AMD Radeon RX 9070 XT, benchmarking it, using it, and digging into its hardware to come to my assessment.

I used industry-standard benchmark tools like 3DMark, Cyberpunk 2077, and PugetBench for Creators to get comparable results with other competing graphics cards, all of which have been tested using the same testbench setup listed above.

I've reviewed more than 30 graphics cards in the last three years, and so I've got the experience and insight to help you find the best graphics card for your needs and budget.

- Originally reviewed March 2025