Wearable technology walks a fine line between utility and aesthetic pleasure. A small device may accomplish myriad tasks, but if it looks bizarre on your body, it's destined for the trash heap. That's probably why I like Amazon Echo Frames (3rd Gen), the wearable smart glasses that, by not trying to do too much, don't overwhelm the essential glasses-ness of the frames.

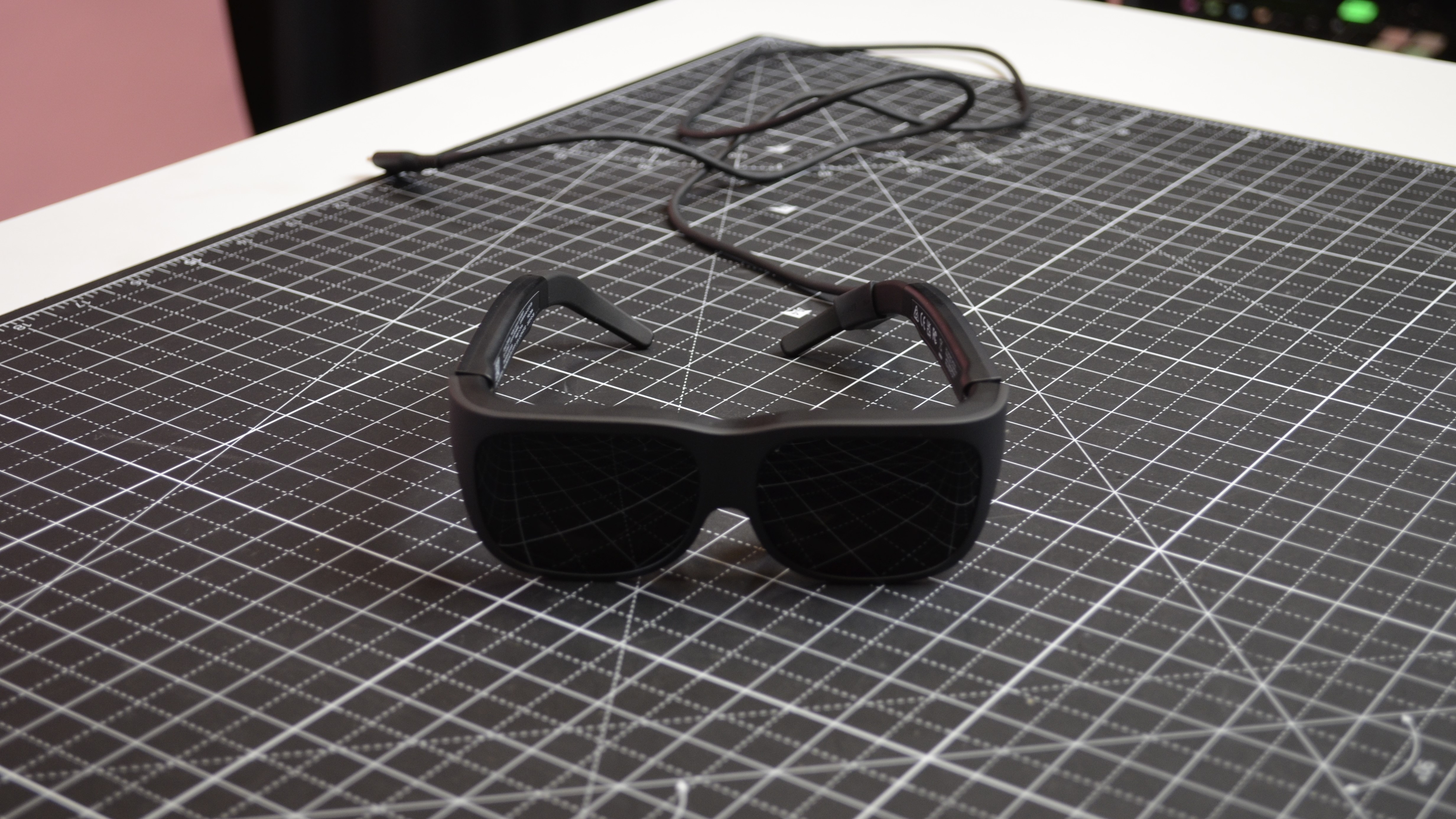

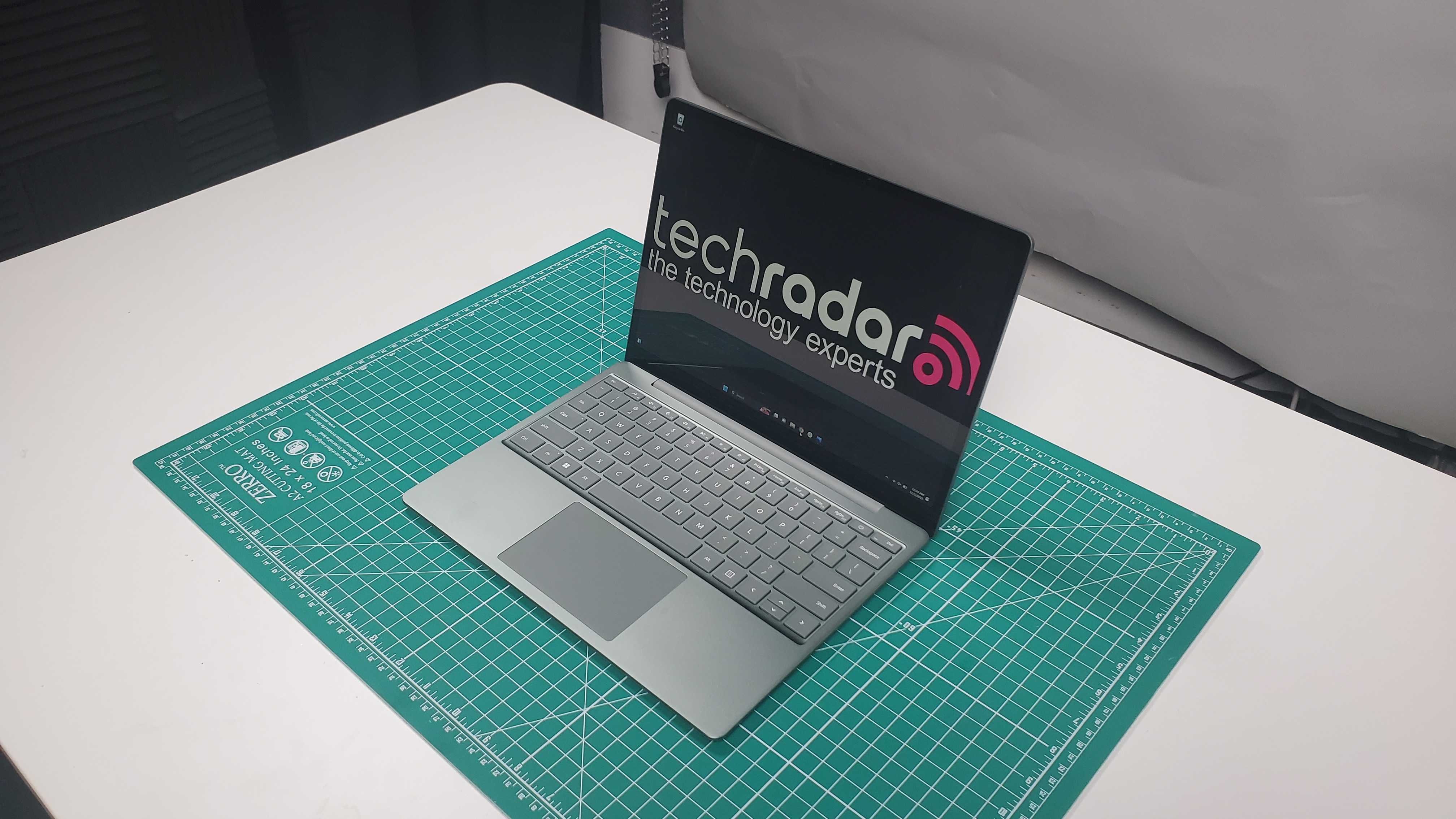

Amazon Echo Frames (3rd Gen) show how Amazon's years of effort in crafting and fine-tuning the smart glasses design have paid off. From the front, these look almost like regular (maybe slightly oversized) glasses. Even from the side, it's hard to tell that I'm wearing a smart companion on my face.

That, by the way, is what it's like to wear Amazon Echo Frames. They can be standard glasses but Alexa is always waiting in the wings – or beside your ears – ready to jump in with a song, a podcast, the audio from your phone, a notification, an answer to a question, or an action in your smart home. Wearing them is a little like having a secret superpower.

Design-wise, I ended up with the "Superman-style" Black Rectangle, which I believe most resembles my current frames. "Resemble" might be a generous word. They're noticeably larger and my wife couldn't decide if she loved or hated them on me. I wore them while walking across Manhattan and didn't get a single weird stare (or any more than I usually get). I was a little concerned that someone could hear my podcast leaking from the stem-based speakers but I didn't get any dirty looks, either.

One thing I did notice is that if I put a knit cap over my ears, the sound improved dramatically, a clear sign I am losing some audio to the environment.

The frames have a few buttons I can use to, for instance, pause audio and raise and lower volume, but I found it was more efficient to ask Alexa to manage these tasks. I tried not to feel too self-conscious when people spotted me speaking to my Echo Frames. At least the microphones are sensitive enough that I can speak almost sotto voce to activate an Alexa command (provided I'm in a semi-quiet environment).

The frames I'm wearing will run you $269.99 (they're only available in the US for now), but there's currently a $75 discount for early adopters, bringing the price down to $194.99. Either way, decent eyeglass frames can run you well over $200. If you also need prescription lenses, which these frames can hold, they might cost you another $200. Of course, you could also just opt for the sunglass models, which start at $329.99. They also have an introductory price of $254.99.

Setup is easy enough and pretty much matches what you'd do for any other Echo device. The Alexa app discovers the glasses, you pair them, and then they're part of your Alexa ecosystem. The only part of the setup that I did not like was charging up the glasses. Echo Frames ship with a custom charge platform and instead of, say, lining up the smart glasses' charge pads or a port with the base, you fold the glasses and then insert the stems into the gap with the lenses facing up toward you. An indicator light lets you know if you got it right. It's a bit of a tight squeeze and I managed to get it wrong once and, naturally, the frames didn't charge. I worry consumers will make the same mistake.

One caveat about my review: I have not had them long enough to get prescription lenses for my Echo Frames, so I could not wear them as often as I do my regular glasses. But I did my best to work with them, commute with them, and wear them when I didn't need to see where I was going.

Amazon Echo Frames (3rd Gen): Price and availability

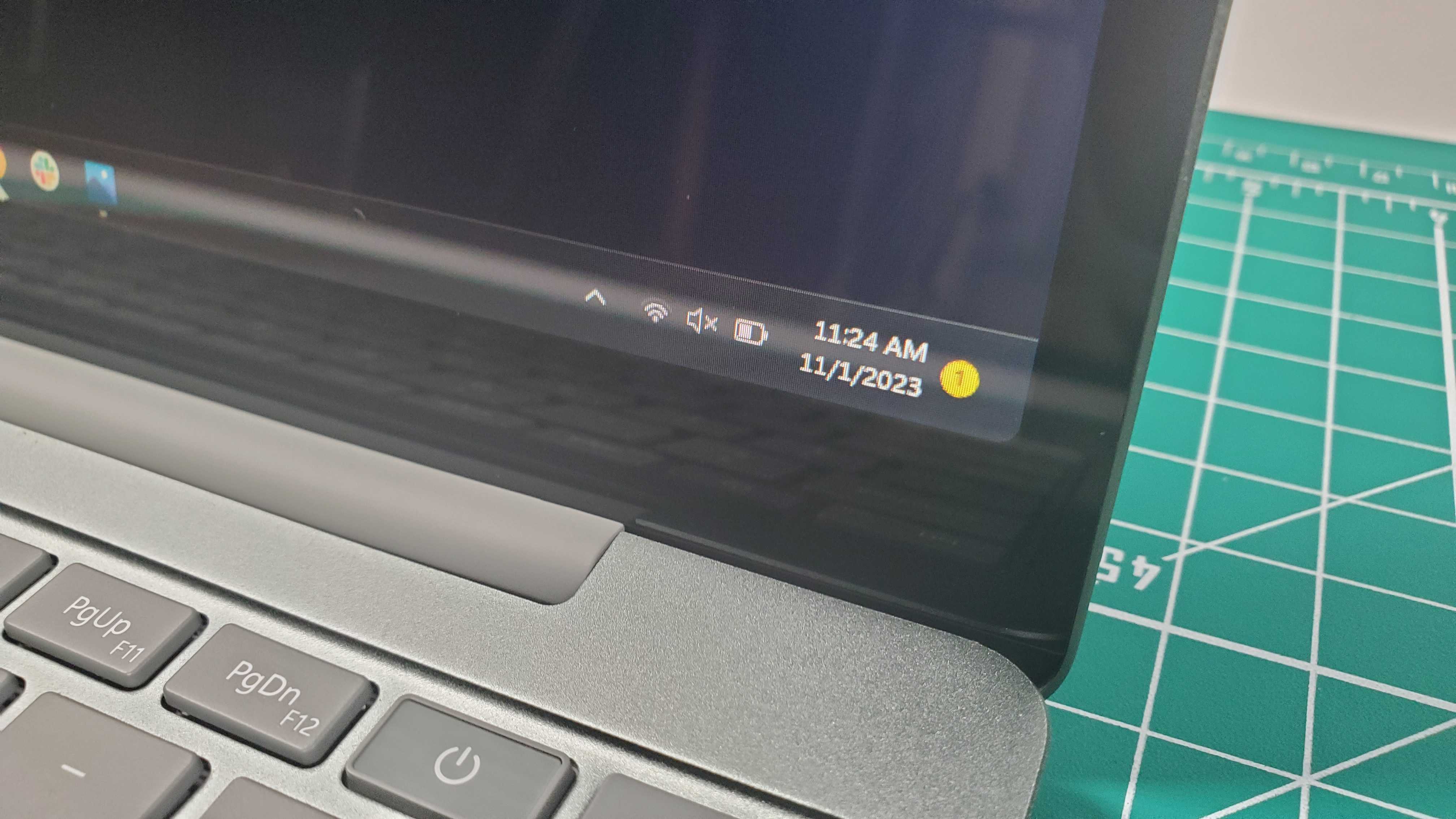

The Amazon Echo Frames (3rd Gen), which Amazon announced in September 2023, are available in a prescription-ready model, as sunglasses, and in a more expensive blue light filtering model. There are five styles, including Blue Round, Black Rectangle (my review unit), Brown Cat Eye, and Gray Rectangle.

While the smart glasses were set to retail for $269.99, Amazon is currently offering a $75 discount, which brings them down to $194.99. Amazon has yet to say when the "introductory period" ends.

Blue light filtering models will start at $299.99 but with the introductory discount will run you $224.99.

The sunglass model is set to retail for $329.99 but comes in at $254.99 with the introductory discount.

If you demand even more style, you can opt for the Carrera Smart Glasses with Alexa, which start at $314 with the discount. They will eventually retail for a more eye-watering $389.99 (ah, the price of fashion). The Carrera models do not support prescription lenses.

While none of the Echo Frames ship with a power adapter (just the cable), you do get a very chic, collapsible case. The case would be even cooler, though, if it could hold a little battery charge.

All of these frames are set to ship on December 7. For now, they're only available in the US.

- Value score: 4/5

Amazon Echo Frames (3rd Gen): Design

- Nearly normal eyeglass looks

- Lightweight and comfortable

- 4 hours of battery at max volume

With the Echo Frames (3rd Gen), I think Amazon got the brief: do as much as possible to make these smart glasses look and feel like normal, analog eyeglasses.

I purposely started wearing them without warning my wife, who did a double-take and asked me what I was wearing. She quickly gleaned they were smart glasses (because what else would I be wearing?) and walked away shaking her head. An hour or so later she looked at me again and seemed to reconsider, telling me that she could no longer decide if she liked them on me or not. I consider this progress.

Sure, the stems, which house the mics, speakers, buttons, processor, and batteries, are easily twice as thick as the stems on my traditional glasses, making it nearly impossible to fold them fully flat, but the front of the frames is indistinguishable from dumb glasses. Button placement, which puts a volume rocker on one stem and a pair of pause, play, and skip action buttons on the other, is inconspicuous enough. Still, I soon found it more efficient to use Alexa for controlling things like volume, play, pause, etc. Pressing both Action Buttons also powers up the frames and prepares them for pairing.

Before I could wear and use the Echo Frames, I had to charge them. The smart eyewear ships with a custom USB-C-based charger, which is probably the weakest link in this package (Amazon supplies the USB-C cable but does not supply a charge adapter). It requires folding the glasses closed (an act that automatically shuts them down, just as opening them turns them on) and jamming the stems into a narrow channel with the lenses facing up. There is nothing intuitive about this and I am certain consumers will get it wrong (as I did on one occasion) and end up not charging their Echo Frames. There's an indicator light on the stand that tells you when the frames are fully charged.

The frames charged pretty quickly (once I properly seated them on the charging base). After that, I powered them up and paired the frames with the Alexa App on my iPhone 15 Pro Max (the Echo Frames will work with any Android phone running Android 9.0 or higher and any iPhone running iOS 14.0 or higher).

Beyond a constant connection to your Frames, the Alexa App provides several other features and customizations. If I set location tracking to stay on constantly for the app, I could use it to find my Amazon Echo Frames.

Even without the app, pairing the Echo Frames with my iPhone turned them into the default Bluetooth speakers, which meant whatever I played on my phone would play back through the Echo Frames.

- Design score: 4/5

Amazon Echo Frames (3rd Gen): Specs

Amazon Echo Frames (3rd Gen): Audio

- Clear, head-filling audio

- No real bass to speak of

- No one notices you're listening to something (unless you turn the volume way up)

- Environmental sounds overcome audio

There is something nice about having music and podcasts ready to go even if I'm not wearing my best AirPods Pro or some other in-ear audio. By melding high-quality audio with the glasses I'd wear every day, there's one less thing for me to carry and worry about. To Amazon's credit, the stereo audio quality coming from the two micro speakers positioned in the stems and near each temple is clear, warm, and can get quite loud.

I enjoyed listening to tracks selected for me by Amazon Music, podcasts, news reports, TikToks, and Alexa's answers to my numerous queries.

Still, these aren't true over-the-ear speakers, which means they'll struggle to produce any kind of bass beat. The White Stripes' "Seven Nation Army" sounded particularly hollow. Obviously, if you care about true audio fidelity, these frames are not for you.

As good as the audio is in relatively quiet environments like my house, the bustling Manhattan streets almost completely overwhelmed the micro speakers, and I could not yell loud enough for Alexa to hear me through the far-field microphones.

Despite being smart frames, there isn't much that's smart about the audio. There are no noise cancellation abilities, which I really would not expect here. However, I am bothered that the Echo Frames can't use their quad of far-field microphones to detect when someone is speaking to me or I start speaking and automatically mute.

Finally, I was surprised to see that Echo Frames don't know when they are on or off my face. In other words, I expected that removing the frames from my face would pause the audio. It does not. At least they instantly pause if you fold them closed.

- Audio score: 3.5/5

Amazon Echo Frames (3rd Gen): Battery

- 4-to-6 hours of battery life

Turns out it was easy to test Amazon Echo Frames' battery life since they will happily continue playing even if you don't keep them on your face. In my test, I pushed the volume to 100% (which eventually got me a warning from Apple that I had exceeded the recommended limit for audio exposure) and just let the Frames play a series of podcasts.

At full volume, I got a solid 4 hours of battery life. They're rated for 6 hours at 80% volume, which tracks with my testing. If you play them less frequently and only talk to Alexa a few times a day, you might get even better battery life.

Even so, this does not compare to your best Bluetooth headphones, and if you run out of battery life, you'll have to return the eyeglasses to their charging base. There's no juice-filled case for you to drop them into and charge up for another few hours of playback.

- Battery score: 4/5

Amazon Echo Frames (3rd Gen): AI

- Alexa responds quickly to most queries

- Alexa's data, which is accessed through my iPhone and the cloud, is generally timely and accurate

- I wish it would stop asking me to unlock my iPhone

Having Alexa on my face is cooler than I thought it would be. In my house, I would whisper, "Alexa, turn on First Plug" to light up my Christmas tree, or quietly ask it for the weather or news about George Santos' status as a member of Congress. Sometimes, I'd have Alexa read my latest notifications.

Alexa is also a much more effective way of changing songs and controlling the audio volume than using the physical buttons.

Amazon's digital assistant was up for pretty much anything, except when I wanted something that could only be accessed by me unlocking my phone. That was annoying and defeats the purpose of a hands-free wearable assistant.

Generally, I was pleased that I could have this quiet little relationship with my wearable Alexa and, for the most part, no one was the wiser, or at least no one felt comfortable calling me out for talking to my eyeglasses.

- AI score: 4/5

Should I buy the Amazon Echo Frames (3rd Gen)?

Buy them if...

Don’t buy them if...

Also consider

How we test

To test the Amazon Echo Frames (3rd Gen), I wore them as often as I could: at work, at home, during my commute. I spoke to Alexa whenever I could and listened to a lot of music, podcasts, TikTok, and other audio. Due to time constraints, I did not get prescription lenses put in.

I've been testing and writing about technology for over 30 years.

- First reviewed December 2023